Prequel's Detection Engine: The Architecture Behind the Signal

By Tony Meehan, Co-Founder & CTO

The dominant model for detection, whether you're monitoring infrastructure reliability or investigating security incidents, is store first, ask questions later. Ship your logs, metrics, and events to a central store. Index everything. Query against it.

That model made sense when infrastructure was simpler. But it's breaking down now. The cost and effort of ingesting and storing every event at enterprise scale has become a real burden. The lag introduced by data pipelines is too long for modern incident response. And the telemetry that matters most for detection (continuous host activity, kernel events, high-frequency process data) is precisely what's most expensive to move and duplicate.

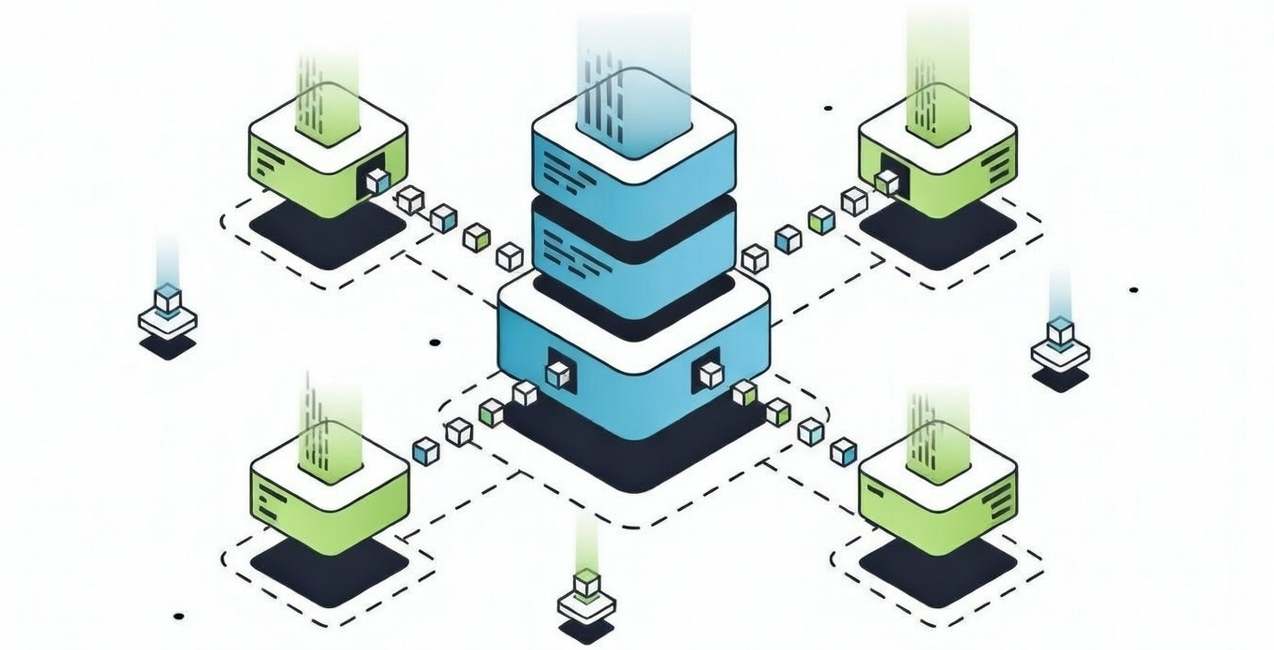

Prequel built its detection engine on a different bet: bring the rules to the data, not the data to the rules.

The Architectural Thesis

Prequel runs detection logic where events are generated, on the hosts, nodes, cloud managed services, and containers being monitored. Distributed pattern matching happens before data moves anywhere. When something significant is found, a small structured signal propagates up the distributed pipeline. The engine simultaneously snapshots the surrounding context at the moment of detection, capturing the data needed for correlation analysis and giving investigators the full picture around the signal without requiring them to reconstruct it from raw streams after the fact. Raw telemetry stays where it is captured.
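A minimal sketch of that flow, not Prequel's implementation: a detector runs next to the event stream, keeps a small ring buffer of recent lines, and emits a compact signal with that context only when a rule matches. The `Signal` and `LocalDetector` names, the rule ID, and the sample log lines are all hypothetical.

```python
import re
from collections import deque
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Signal:
    """Small structured message sent upstream on a match (hypothetical shape)."""
    rule_id: str
    matched_line: str
    context: List[str] = field(default_factory=list)


class LocalDetector:
    """Evaluates one regex rule against a local event stream."""

    def __init__(self, rule_id: str, pattern: str, context_lines: int = 3):
        self.rule_id = rule_id
        self.pattern = re.compile(pattern)
        # Ring buffer of the most recent lines seen before the current one.
        self.recent = deque(maxlen=context_lines)

    def process(self, line: str) -> Optional[Signal]:
        signal = None
        if self.pattern.search(line):
            # Snapshot the surrounding context at the moment of detection.
            signal = Signal(self.rule_id, line, list(self.recent))
        self.recent.append(line)
        return signal


detector = LocalDetector("example-oom-kill", r"Out of memory: Killed process \d+")
stream = [
    "kernel: memory pressure rising",
    "app: request served in 12ms",
    "kernel: Out of memory: Killed process 4242",
    "app: request served in 9ms",
]
# Only matches leave the host; the raw stream is never forwarded.
signals = [s for s in map(detector.process, stream) if s is not None]
```

Four lines go in and one signal comes out, carrying the two lines that preceded the match.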

The advantages compound. Data volumes that would be prohibitively expensive to centralize (kernel-level activity, verbose application logs, packet-level network events) are fully in scope for detection, because the engine never has to move them. Detection latency drops from minutes to milliseconds, because the rules are co-located with the source. Scaling the fleet scales detection capacity automatically, because every node brings its own evaluation power with it.

This is the same architectural conclusion we expect the next generation of security analytics platforms to converge on. The SIEM-as-data-warehouse model is under pressure, not just from cost but from the recognition that the most important signals are the ones most expensive to centralize. Prequel's engine is purpose-built on that foundation, applied across the full stack: logs, metrics, process activity, kernel events, Kubernetes state, network telemetry, and external observability platforms.

Reliability, Security, and the Space Between

The Prequel engine is domain-agnostic. It evaluates conditions against data streams and fires when they match, without caring whether a pattern represents an infrastructure failure or an active attack.

For reliability and performance, this means detecting failure sequences earlier in the chain. Cascading failures, resource exhaustion, configuration drift causing degradation: these are patterns that precede an outage, not symptoms of one. Threshold-based alerting fires after something has already gone wrong. Multi-signal correlation fires on the sequence of conditions that leads there. The difference is whether engineering teams are responding to incidents or preventing them.
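The contrast with threshold alerting can be sketched as an ordered-sequence check: the rule fires only when a series of conditions occurs in order inside a time window. This is an illustrative matcher under assumed event names, not the engine's actual algorithm.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Event:
    ts: float  # seconds since some reference point
    kind: str  # hypothetical condition name


def sequence_matched(events: List[Event], steps: List[str], window_s: float) -> bool:
    """Return True if `steps` occur in order within `window_s` seconds."""
    if not steps:
        return False
    ordered = sorted(events, key=lambda e: e.ts)
    for i, start in enumerate(ordered):
        if start.kind != steps[0]:
            continue
        next_step = 1
        for later in ordered[i + 1:]:
            if later.ts - start.ts > window_s:
                break  # the window anchored at `start` has closed
            if next_step < len(steps) and later.kind == steps[next_step]:
                next_step += 1
        if next_step == len(steps):
            return True
    return False


events = [
    Event(0.0, "conn_pool_saturated"),
    Event(20.0, "retry_storm"),
    Event(45.0, "queue_backlog"),
]
steps = ["conn_pool_saturated", "retry_storm", "queue_backlog"]

in_window = sequence_matched(events, steps, window_s=60)
too_slow = sequence_matched(events, steps, window_s=30)
wrong_order = sequence_matched(events, list(reversed(steps)), window_s=60)
```

None of the three events needs to cross a threshold on its own. The rule describes the path to the outage rather than the outage itself.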

For security, the distributed architecture is a structural advantage. Container escapes, lateral movement, privilege escalation chains, anomalous deployment behavior: the highest-fidelity detection signals are high-volume host-level events. These are exactly what traditional SIEMs struggle to ingest cost-effectively, which is why so many security teams end up with gaps in their coverage by design. Running detection at the host means those signals are in scope without the ingestion bill.

The same engine handles both. A single rule can describe a pattern that begins with an infrastructure event and ends with a security indicator, or vice versa. The boundary between reliability and security is often exactly where the most important signals live.

Detection Across Every System You Run

Failures and threats rarely confine themselves to a single system. The sequence that matters is usually spread across your logs, your metrics platform, your cloud provider, and your security tooling at the same time.

The engine correlates across all of it. Rules pull signal from container and host logs, Kubernetes resource state, infrastructure metrics, process activity, and external platforms including CloudWatch, Datadog, Grafana Cloud, Google Cloud Monitoring, your SIEM, and third-party status pages, all in the same detection window. External sources are first-class: queried at detection time, correlated with local signals, without centralizing either.

In practice, this looks like:

- A rule that fires when a CloudWatch metric on a managed database instance spikes and the application connecting to it logs a specific error pattern, correlating cloud infrastructure signals with application behavior across systems that currently have no shared view.

- A rule that catches the degradation sequence combining a Datadog APM latency spike with a Kubernetes pod restart on the same service, neither of which is meaningful alone but together signals a specific failure mode.

- A security rule that correlates failed authentication events from your SIEM with privilege escalation activity in live process data on the affected host, connecting centralized security logging with real-time host telemetry.

- A rule that suppresses spurious alerts when an upstream dependency's public status page reports degraded state, so on-call engineers aren't paged for a third-party outage you can't control.

Each of these crosses tool boundaries that currently require a human to bridge manually during an incident. The engine bridges them automatically, before the escalation.
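A rough sketch of how such a cross-source rule might be evaluated, combining the first and last examples above. The external sources are stand-in callables, not real CloudWatch or status-page clients, and the error text, threshold, and function names are assumptions made for illustration.

```python
from typing import Callable

ERROR_PATTERN = "connection timed out"  # hypothetical application error text


def evaluate(
    log_line: str,
    fetch_db_cpu: Callable[[], float],
    dependency_degraded: Callable[[], bool],
    cpu_threshold: float = 90.0,
) -> str:
    """Correlate a local log match with external signals queried on demand."""
    # Local signal first: no external calls are made unless the log matches.
    if ERROR_PATTERN not in log_line:
        return "no_match"
    # A degraded upstream dependency explains the error, so do not page.
    if dependency_degraded():
        return "suppressed"
    # The external metric is queried at detection time, not ingested in bulk.
    if fetch_db_cpu() >= cpu_threshold:
        return "fire"
    return "no_match"


line = "orders-api: connection timed out talking to db-primary"
fired = evaluate(line, lambda: 97.5, lambda: False)
suppressed = evaluate(line, lambda: 97.5, lambda: True)
quiet = evaluate(line, lambda: 41.0, lambda: False)
```

Checking the local condition first means the external platforms are only queried when there is already something to correlate.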

This is source-level search: the ability to run detection and investigation queries against data wherever it lives, across on-prem, cloud, and hybrid deployments, without moving it or rewriting rules per platform. Reliability monitoring and threat investigation share the same capability. Every data source in your environment becomes searchable on its own terms, and you can discover what you need to search against before you know you need it.

The Economics of Distributed Detection

Distributed detection changes the cost structure at every level.

What moves through the pipeline is filtered signal, not raw data. A service generating gigabytes of logs per hour might produce a handful of match messages per minute: the confirmed detections, stripped of everything irrelevant. Egress fees for moving raw telemetry across cloud zones or to central stores are eliminated. Retention and indexing infrastructure can be sized for compliance and debugging, not real-time detection throughput.
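To put rough numbers on that ratio, here is a back-of-the-envelope calculation. Every input (5 GiB of logs per hour, six match messages per minute, 2 KiB per message) is an assumed, illustrative figure rather than a Prequel measurement. Substitute your own.

```python
def hourly_bytes(raw_gib_per_hour: float, matches_per_minute: int, bytes_per_match: int):
    """Return (raw bytes generated per hour, signal bytes moved per hour)."""
    raw = raw_gib_per_hour * 1024**3
    signal = matches_per_minute * 60 * bytes_per_match
    return raw, signal


# Illustrative inputs only; not measured values.
raw, signal = hourly_bytes(raw_gib_per_hour=5, matches_per_minute=6, bytes_per_match=2048)
reduction = raw / signal  # how many times less data crosses the network

print(f"raw: {raw} B/h, signal: {signal} B/h, reduction: ~{reduction:.0f}x")
```

Under these assumptions the pipeline carries well under a megabyte per hour for a service that generates several gibibytes locally.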

Detection scales horizontally with the fleet. There is no central ingestion bottleneck to rightsize as infrastructure grows. Expensive instrumentation (profiling, detailed tracing, packet capture) can be activated conditionally, targeted to specific workloads where the engine has already identified a signal, rather than running everywhere as permanent overhead.
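Conditional activation can be pictured as a small time-boxed switch: a signal for a workload turns expensive instrumentation on for a limited period, and everything else stays off. The class name and the TTL approach are assumptions for illustration, not a description of Prequel's mechanism.

```python
class ConditionalProfiler:
    """Tracks which workloads currently have expensive instrumentation enabled."""

    def __init__(self, ttl_s: float):
        self.ttl_s = ttl_s
        self._expires = {}  # workload name -> timestamp when profiling turns off

    def on_signal(self, workload: str, now: float) -> None:
        # A detection for this workload (re)starts its profiling window.
        self._expires[workload] = now + self.ttl_s

    def is_active(self, workload: str, now: float) -> bool:
        return now < self._expires.get(workload, float("-inf"))


profiler = ConditionalProfiler(ttl_s=300)
profiler.on_signal("checkout-service", now=1000.0)

during = profiler.is_active("checkout-service", now=1200.0)
after = profiler.is_active("checkout-service", now=1300.0)
untouched = profiler.is_active("search-service", now=1200.0)
```

Timestamps are passed in explicitly so the behavior is easy to test. A real agent would read a clock.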

The teams that feel the difference most directly are the ones who've watched their observability bill scale faster than their engineering headcount, and who've accepted gaps in their security coverage because full-fidelity host monitoring was simply too expensive.

The Schema Behind the Engine

The detection engine is one layer of what Prequel calls Common Risk Enumerations (CREs), a structured standard for known failure modes in distributed systems, modeled on the CVE framework in security. Each detection rule encodes not just detection logic but root cause, business impact, and remediation guidance. Rules are intelligent by design: they support scripting to execute complex operations on match, whether that means triggering a remediation workflow, enriching the signal with additional context, or conditionally suppressing noise based on runtime state.

When the engine fires, the output isn't a raw alert that requires reconstruction. It's a complete picture of what happened, why, and what to do, delivered at the moment of detection, not at the end of a postmortem.
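One way to picture a rule that carries its own explanation is a record bundling the detection pattern with root cause, impact, and remediation, plus an optional hook that runs on match. The field names, the rule ID, and the hook contract below are hypothetical and do not reflect the actual CRE schema.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Rule:
    rule_id: str
    pattern: str
    root_cause: str
    impact: str
    remediation: str
    # Optional script run on match: may enrich the detection,
    # or return None to suppress it.
    on_match: Optional[Callable[[dict], Optional[dict]]] = None

    def evaluate(self, line: str) -> Optional[dict]:
        if not re.search(self.pattern, line):
            return None
        detection = {
            "rule_id": self.rule_id,
            "matched": line,
            "root_cause": self.root_cause,
            "impact": self.impact,
            "remediation": self.remediation,
        }
        return self.on_match(detection) if self.on_match else detection


def tag_severity(detection: dict) -> dict:
    """Example hook: enrich the detection with extra context."""
    return {**detection, "severity": "high"}


rule = Rule(
    rule_id="EXAMPLE-0001",  # hypothetical identifier
    pattern=r"queue backlog exceeded \d+ messages",
    root_cause="Consumers are slower than producers.",
    impact="Message delivery is delayed.",
    remediation="Scale consumers or throttle producers.",
    on_match=tag_severity,
)

detection = rule.evaluate("broker: queue backlog exceeded 50000 messages")
miss = rule.evaluate("broker: heartbeat ok")
```

The detection that leaves the host already contains what happened, why, and what to do, so the consumer does not have to look the rule up separately.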

Prequel maintains a growing library of rules covering Kafka, RabbitMQ, Nginx, Prometheus, Kubernetes, Istio, ArgoCD, Vault, PostgreSQL, and dozens more systems. Organizations can also author custom rules using the same framework, with the same distribution and versioning guarantees.

The distributed engine is what makes it practical to run hundreds of these rules continuously across a production fleet, without an expensive data pipeline in front of them. The intelligence runs where your data already lives. The signal comes to you.
